In the dynamic digital landscape, continuous optimization is key to success, and at the heart of this optimization lies a powerful technique known as A/B testing. This method allows businesses to make data-driven decisions, moving beyond mere intuition to understand exactly what resonates with their audience. It’s a fundamental process for anyone looking to refine digital experiences and boost performance. So, what is A/B testing? This guide covers how it works, how to run a test, and how to avoid the most common mistakes.

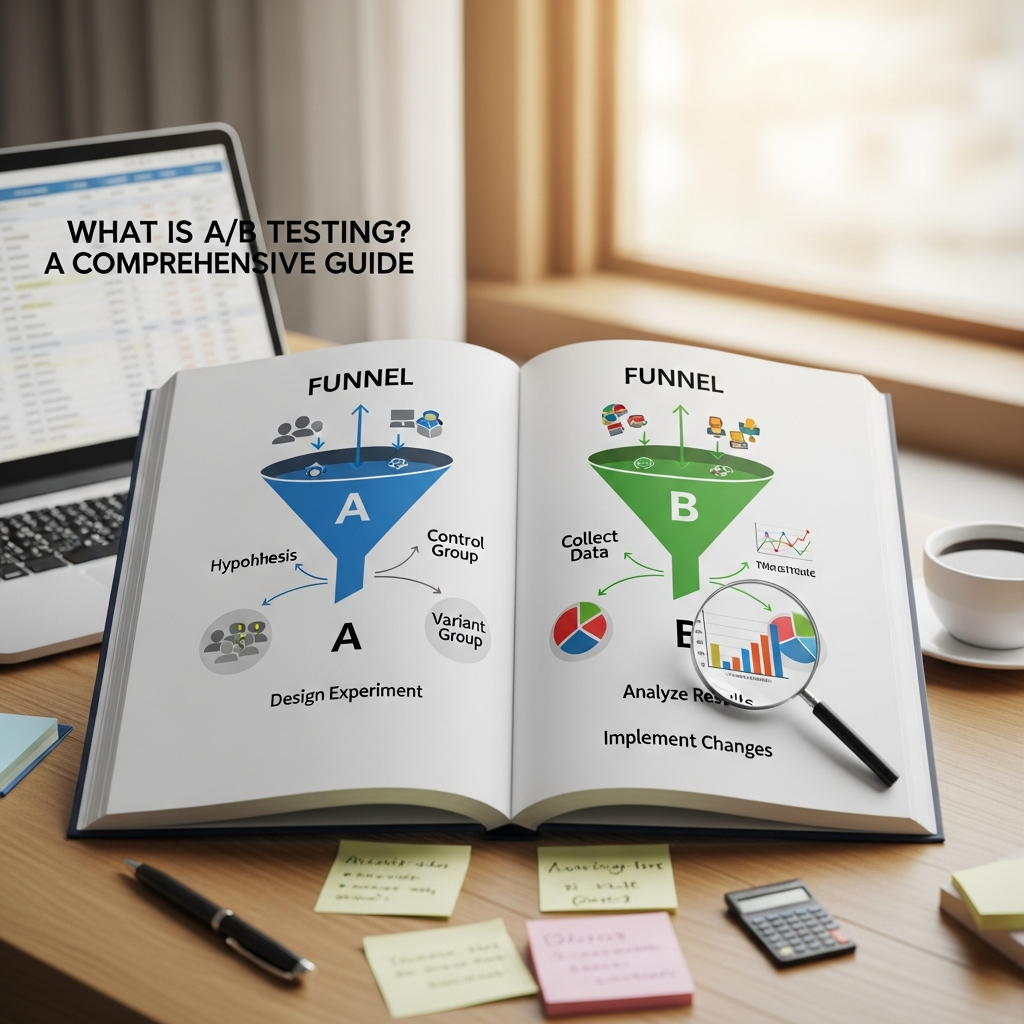

A/B testing, often referred to as split testing, is a controlled experiment that compares two versions of a digital asset—such as a webpage, email, or application—to determine which one performs better against a specific goal. Users are randomly divided, with one group (the control, Version A) experiencing the original, and the other (the variant, Version B) interacting with the modified version. By analyzing the results, businesses can confidently identify which changes lead to improved outcomes.

Unpacking the Fundamentals: What Exactly Is A/B Testing?

At its core, A/B testing is a scientific approach to understanding user behavior and optimizing digital content. It’s about systematically experimenting with different elements to see which variations yield superior results. This methodology isn’t just a simple comparison; it involves careful planning, execution, and analysis to ensure reliable and actionable insights. Understanding how it works is crucial for any digital strategy.

The process begins by identifying a single element you wish to test, such as a headline, an image, a call-to-action button, or even an entire page layout. The original version is known as the “control” (A), and the modified version is the “variant” (B). Traffic is then split between these two versions, typically in equal proportions, though this can be adjusted. As users interact with each version, relevant data points are collected and analyzed to see which version drives better performance against predetermined metrics. This systematic comparison provides clear, empirical evidence of what works and what doesn’t, allowing for informed decision-making.

For example, an e-commerce company might test two versions of a product page: Version A with a “BUY NOW” button and Version B with an “ADD TO CART” button. By tracking which button leads to more completed purchases, the company can make a data-driven decision to implement the more effective option across all product pages. This is A/B testing in its most practical form.

Why A/B Testing Is Indispensable: The Benefits of a Data-Driven Approach

The importance of A/B testing cannot be overstated in today’s competitive digital landscape. It offers numerous advantages that empower businesses to enhance their online presence, improve user experiences, and ultimately drive growth. One of the primary reasons to embrace A/B testing is its ability to foster data-driven decision-making, moving away from assumptions and intuition.

A significant benefit is improved conversion rates. Whether the goal is to increase email sign-ups, product purchases, or click-through rates, A/B testing allows for the refinement of strategies by identifying which elements resonate most effectively with the audience. Even minor adjustments, such as tweaking a headline or changing the color of a call-to-action button, can lead to substantial improvements in performance. This iterative process of testing and optimizing ensures that digital assets are continuously refined to maximize their effectiveness.

Beyond conversions, A/B testing plays a vital role in enhancing the customer experience. By understanding how users interact with different layouts, content, and features, businesses can tailor their online presence to better suit their target audience’s preferences. This leads to a smoother, more intuitive user journey, making it easier for visitors to navigate, complete tasks, and find relevant information. A well-optimized user experience, validated through rigorous testing, directly contributes to higher engagement and satisfaction.

Furthermore, A/B testing reduces the risk associated with changes. Rather than implementing major updates based on assumptions that could potentially harm performance, A/B testing allows businesses to test smaller changes and gather insights before committing resources on a large scale. This minimizes the chances of making costly mistakes and ensures that any deployed changes are proven to have a positive impact. It’s a strategic process where combining data with intuition leads to more successful outcomes.

A/B testing also leads to a deeper understanding of the audience. By observing how different segments of users respond to different variations, businesses can identify patterns in behavior and refine their marketing strategies accordingly. For instance, a test might reveal that a younger demographic prefers a modern web design, while older users respond better to a classic, straightforward layout. This granular insight enables personalized experiences and more targeted communication, ultimately leading to greater engagement and loyalty.

Finally, this methodology helps businesses allocate resources effectively and increase return on investment (ROI). By focusing time, budget, and effort on strategies that have been empirically proven to deliver results, companies can avoid wasting resources on ineffective initiatives. It maximizes the efficiency of existing traffic, leading to higher revenue without necessarily increasing acquisition costs. The ongoing nature of A/B testing ensures that strategies remain flexible and adapt to evolving customer preferences and market trends.

The Step-by-Step Process: How to Implement an A/B Test

Implementing an A/B test effectively requires a structured approach. It’s not simply about throwing two versions against a wall to see what sticks; it involves careful planning, execution, and analysis. The sections below walk through each crucial step of the process.

Defining Your Hypothesis

Every successful A/B test begins with a clear, testable hypothesis. This is an educated guess about why a particular change will lead to a specific improvement. A strong hypothesis typically follows an “If… then… because…” structure. For instance: “If we change the call-to-action button text from ‘Submit’ to ‘Get Started,’ then more users will click the button, because ‘Get Started’ implies a clearer, lower-commitment next step.” Without a clear hypothesis, it’s easy to run an experiment without a focused objective, leading to ambiguous results.

The hypothesis should be based on existing data, user feedback, or observed pain points in the customer journey. For example, if analytics show a high drop-off rate on a particular form, the hypothesis might focus on simplifying the form fields. Clearly defining your hypothesis ensures that the test has a purpose and that you know what success looks like. This initial step is what makes experimentation purposeful.

Identifying Your Variables

Once your hypothesis is clear, the next step is to identify the specific elements you will test. In a true A/B test, it’s paramount to focus on changing only one variable at a time. This is critical because if multiple changes are introduced simultaneously, it becomes impossible to determine which specific change caused the observed difference in performance.

Variables can include anything from minute details to larger structural elements:

- Headlines and Copy: Different wordings, lengths, or tones.

- Call-to-Action (CTA) Buttons: Changes in text, color, size, or placement.

- Images and Videos: Different visuals, their placement, or even their presence/absence.

- Page Layout and Design: Arrangement of elements, navigation menus, or form fields.

- Pricing Structures: How prices are displayed or different offers.

- Email Subject Lines: To improve open rates.

By isolating a single variable, you gain clear insights into its impact. This meticulous approach is key to reliable, precise measurement.

Creating Variations

With your variable identified, you create the “variant” (Version B) that incorporates the change you want to test, while keeping the “control” (Version A) as is. The variant should directly reflect your hypothesis. If you hypothesize that a green button will perform better than a blue one, your variant will feature the green button, while the control retains the blue button.

It’s important to ensure that the variations are correctly implemented and that all tracking mechanisms are in place for both versions. Technical glitches or tracking errors can skew results and invalidate the entire experiment. Quality assurance (QA) of both the control and variant is essential before launching the test to avoid any unforeseen issues. This meticulous setup protects the validity of the experiment.

Running the Experiment

With variations ready, the experiment can be launched. This involves randomly dividing your audience and directing a portion to the control and another to the variant. Most A/B testing platforms handle this traffic distribution automatically, ensuring that users are randomly assigned to one version and consistently see that same version throughout the test to maintain data integrity.
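Commercial platforms handle assignment internally, but the idea of "random, yet consistent per user" can be sketched with a hash. The following is a minimal illustration, not any particular platform's method; the function name `assign_variant`, the experiment name, and the user ID format are all assumptions made for the example.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variant_share: float = 0.5) -> str:
    """Return 'A' (control) or 'B' (variant); the same user always gets the same answer."""
    # Hash the experiment name together with the user id so that each
    # experiment splits the same users independently of other experiments.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits (32 bits) to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "B" if bucket < variant_share else "A"

# Hypothetical usage: the user id would normally come from a cookie or account id.
print(assign_variant("user-42", "cta-text-test"))
```

Because the result depends only on the inputs, no assignment table needs to be stored, and a returning visitor sees the same version as long as the identifier is stable.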

The duration of an A/B test is crucial and depends on several factors, including traffic volume, the minimum detectable effect (MDE), and the desired statistical significance. While some suggest a minimum of two weeks, the general guideline is to run a test for at least two full business cycles (typically 2-4 weeks) to account for variations in user behavior, such as differences between weekdays and weekends, or seasonal changes. Running a test for too short a time can lead to unreliable data and premature conclusions. Conversely, running a test for too long can introduce external factors that muddy the data.
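To make the link between traffic, MDE, and duration concrete, here is a rough sketch using the standard normal-approximation formula for comparing two proportions. The baseline rate, relative MDE, 80% power default, and daily traffic figure are illustrative assumptions, and dedicated calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist

def sample_size_per_group(baseline_rate, relative_mde, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH group for a two-sided test."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)   # rate we want to be able to detect
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)

def estimated_days(n_per_group, daily_visitors, groups=2):
    """Days needed if all daily visitors are split evenly across the groups."""
    return math.ceil(n_per_group * groups / daily_visitors)

# Illustrative numbers: 5% baseline conversion, detect a 10% relative lift.
n = sample_size_per_group(0.05, 0.10)
print(n, estimated_days(n, daily_visitors=5000))
```

If the estimate comes out shorter than a full weekly cycle, it is still worth rounding the duration up to whole weeks so that weekday and weekend behavior are both represented.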

During the experiment, it’s vital to monitor for any issues but resist the temptation to stop the test early, even if initial results seem promising. Patience here is what produces robust data.

Analyzing Results and Taking Action

Once the test has run for a sufficient duration and collected enough data, the next step is to analyze the results. This involves comparing the performance of the control and the variant against your predefined metrics and, crucially, determining the statistical significance of the observed differences.

Statistical significance indicates how unlikely the observed difference between the control and variant would be if the two versions actually performed the same. A commonly accepted threshold is a 95% confidence level (a 5% significance level): if there were no real difference, a result at least this extreme would be expected less than 5% of the time. If a test achieves statistical significance, you have reasonable evidence that the winning variation genuinely outperforms the control. Tests such as the chi-squared test are often used to calculate this.
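As a rough illustration of the calculation, the sketch below runs a two-proportion z-test using only the standard library. For a simple two-version comparison, the squared z statistic equals the chi-squared statistic (without continuity correction), so the two approaches give the same p-value. The conversion counts are made-up numbers for the example.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the best estimate if both versions truly perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Illustrative data: control converts 500 of 10,000; variant converts 580 of 10,000.
z, p = two_proportion_z_test(500, 10_000, 580, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")  # p falls below 0.05 for these counts
```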

Beyond statistical significance, it’s also important to consider practical significance. A small, statistically significant improvement might not translate into a meaningful business impact. For example, a 0.1% increase in conversions might be statistically significant with high traffic, but its practical impact on revenue could be trivial.
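One way to weigh practical significance is to look at a confidence interval for the lift and compare it with the smallest improvement that would justify the rollout. The sketch below is one such approach under a normal approximation; the threshold `min_worthwhile_lift` and the traffic numbers are hypothetical and would come from your own business case.

```python
import math
from statistics import NormalDist

def lift_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Confidence interval for the absolute difference in conversion rate (B minus A)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se

def is_practically_significant(ci_low, min_worthwhile_lift):
    """True only if even the pessimistic end of the interval clears the bar."""
    return ci_low >= min_worthwhile_lift

# High-traffic illustration: 5.0% vs 5.1% conversion with one million visitors each.
low, high = lift_confidence_interval(50_000, 1_000_000, 51_000, 1_000_000)
print(low > 0)                                   # True: interval excludes zero
print(is_practically_significant(low, 0.005))    # False: lift is below a 0.5-point bar
```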

Based on the analysis, you will either confirm or reject your hypothesis. If the variant is the clear winner and achieves both statistical and practical significance, it should be implemented. If the control performs better, or if the results are inconclusive, you’ve still gained valuable insights. It’s also important to document everything, even failed tests, to build a knowledge base for future optimization efforts. This continuous learning cycle is fundamental to ongoing improvement.

Key Metrics to Track in A/B Testing

To effectively determine the success of your A/B testing efforts, it’s essential to track the right metrics. These key performance indicators (KPIs) should align directly with your overall business objectives and the specific goals of each test.

Here are some of the most common and impactful metrics:

- Conversion Rate: This is arguably the most common and critical metric. It measures the percentage of users who complete a desired action, such as making a purchase, signing up for a newsletter, downloading a resource, or filling out a form. An increase in conversion rate directly indicates improved performance.

- Click-Through Rate (CTR): CTR measures the percentage of users who click on a specific element, such as a button, link, or image. This metric is particularly useful for testing the effectiveness of CTAs, headlines, or ad copy.

- Revenue Per Visitor (RPV) / Average Order Value (AOV): For e-commerce businesses, these metrics are crucial. RPV measures the average revenue generated from each visitor, while AOV tracks the average value of each transaction. Testing variations that impact pricing, product recommendations, or checkout flows can directly influence these figures.

- Bounce Rate: This metric indicates the percentage of visitors who leave a website after viewing only one page. A lower bounce rate often suggests that users are finding the content engaging and relevant, indicating an improved user experience.

- Time on Page / Session Duration: These metrics measure how long users spend on a particular page or during an entire session. Longer durations often correlate with higher engagement and interest, especially for content-heavy pages.

- Pages Per Session: This metric indicates how many pages a user views during a single visit. A higher number can suggest better navigation, more engaging content, or a more intuitive site structure.

- Form Completion Rate: For websites relying on lead generation, tracking the percentage of users who successfully complete a form is vital. A/B testing can help optimize form fields, design, and messaging to reduce friction and increase submissions.

- User Engagement (e.g., Scrolls, Video Plays): Depending on the content, tracking interactions like how far users scroll down a page, whether they play embedded videos, or interact with specific elements can provide deeper insights into content effectiveness.

By carefully selecting and tracking the most relevant metrics for each A/B test, businesses can gain a clear, quantitative understanding of which variations are truly driving improvement. This data-driven approach is the cornerstone of actionable insights.
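Several of the metrics above reduce to simple ratios over session data. The sketch below shows one way to compute them for a single test group; the `Session` fields are a hypothetical schema, and for simplicity each session is treated as one visitor, which real analytics tools do not necessarily do.

```python
from dataclasses import dataclass

@dataclass
class Session:
    pages_viewed: int
    clicked_cta: bool
    converted: bool
    revenue: float

def summarize(sessions):
    """Compute common A/B testing metrics for one group of sessions."""
    n = len(sessions)
    if n == 0:
        raise ValueError("no sessions to summarize")
    orders = sum(s.converted for s in sessions)
    revenue = sum(s.revenue for s in sessions)
    return {
        "conversion_rate": orders / n,
        "click_through_rate": sum(s.clicked_cta for s in sessions) / n,
        "bounce_rate": sum(s.pages_viewed == 1 for s in sessions) / n,
        "pages_per_session": sum(s.pages_viewed for s in sessions) / n,
        "revenue_per_visitor": revenue / n,
        # AOV divides by orders, not visitors, so guard against zero orders.
        "average_order_value": revenue / orders if orders else 0.0,
    }

control = [
    Session(1, False, False, 0.0),
    Session(3, True, True, 40.0),
    Session(2, True, False, 0.0),
    Session(5, True, True, 60.0),
]
print(summarize(control))
```

Running the same function on the control and variant groups gives directly comparable figures for each metric.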

Common Applications of A/B Testing

The versatility of A/B testing makes it applicable across a wide range of digital assets and marketing efforts. From refining website elements to optimizing entire campaigns, this methodology provides a systematic way to improve performance.

Website Optimization

This is perhaps the most well-known application. A/B testing is extensively used to optimize various components of a website, leading to better user experience and higher conversion rates.

- Landing Pages: Businesses frequently test different headlines, calls-to-action, images, form fields, and layouts on landing pages to maximize lead generation or product sign-ups. For example, testing a hero image against a video on a homepage can reveal which format reduces bounce rates and increases time on page.

- Product Pages: For e-commerce sites, A/B testing product descriptions, images, pricing displays, “Add to Cart” button designs, and customer testimonials can significantly impact purchase conversion rates.

- Navigation Menus: Simplifying menu structures or changing the wording of navigation items can improve usability and product discovery.

- Checkout Flows: Optimizing the number of steps, form fields, or payment options in a checkout process can reduce cart abandonment and increase completed transactions.

- Pop-ups and Banners: Testing different messaging, timing, or design of promotional pop-ups or banners can improve their effectiveness without alienating users.

Email Campaigns

Email marketing benefits immensely from A/B testing, as even small improvements can lead to significant gains in engagement and conversions.

- Subject Lines: Testing different subject lines is crucial for improving email open rates. A compelling subject line can drastically increase the chances of an email being read.

- Email Body Content: Variations in headlines, images, calls-to-action, personalization, and overall layout within the email body can be tested to increase click-through rates to linked content or product pages.

- Send Times: Experimenting with different send times can reveal optimal periods when the audience is most receptive to engaging with emails.

Advertising Campaigns

A/B testing is indispensable for optimizing the performance and ROI of paid advertising efforts.

- Ad Copy and Creatives: Running tests on different headlines, body text, images, or video creatives for ads across platforms like social media or search engines can identify which versions attract more clicks and higher quality leads.

- Audience Targeting: While not a direct A/B test of creative, testing different audience segments with the same ad variations can help refine targeting strategies for future campaigns.

- Landing Page Alignment: Ensuring that ad copy and the linked landing page content are consistent and relevant is critical. A/B testing combinations of ads and landing pages can improve overall campaign performance.

Product Features and User Experience (UX)

Beyond marketing, A/B testing is deeply integrated into product development to ensure new features and design changes genuinely enhance the user experience.

- New Feature Rollouts: Testing new features with a subset of users before a full launch allows product teams to gather real-world data, identify issues, and refine the feature based on user feedback.

- UI Elements: Changes to user interface elements, such as button positions, icon designs, or input fields within an application, can be tested to improve usability and task completion rates.

- Onboarding Flows: Optimizing the initial user onboarding experience can significantly impact retention and user satisfaction. A/B testing different onboarding steps or messaging can lead to more effective user adoption.

By applying A/B testing across these diverse areas, businesses can cultivate a culture of continuous experimentation and data-driven improvement in every digital facet.

Best Practices for Effective A/B Testing

To harness the full power of A/B testing and avoid common pitfalls, adhering to a set of best practices is essential. These guidelines ensure that your experiments yield reliable, actionable insights, driving meaningful improvements.

Focus on One Variable at a Time

As mentioned previously, this is a cornerstone of sound experimentation. When you test multiple elements simultaneously, like a new headline and a different button color, and see a change in performance, you won’t know which specific element was responsible for the outcome. By isolating a single variable, you gain clear, unambiguous data about its impact, allowing for precise adjustments and informed decision-making.

Ensure Statistical Significance

Statistical significance is paramount for trusting your A/B test results. It tells you how unlikely the observed difference between your control and variant would be if random chance alone were at work. Most businesses aim for a 95% confidence level, meaning a difference this large would appear less than 5% of the time if the two versions truly performed the same. Stopping a test prematurely, before the planned sample size is reached, can lead to acting on false positives and making ineffective or even detrimental changes. Decide on the required sample size before launch, and judge significance once the test has collected it rather than stopping the first time the threshold is crossed.

Run Tests for Sufficient Duration

The length of your A/B test is critical for collecting representative data. Rushing a test can lead to misleading results, as user behavior often varies by day of the week, time of day, and even seasonal factors. A general rule of thumb is to run tests for at least two full business cycles, typically 2-4 weeks. This duration helps account for weekly patterns and ensures that a diverse enough sample of your audience interacts with both versions. Running tests for too long, however, can also introduce issues like cookie deletion or external site changes that can corrupt data.

Avoid Premature Conclusions

It can be tempting to stop an A/B test as soon as one variation shows an early lead, especially if it aligns with your initial hypothesis. However, early leads can often be random fluctuations. Without waiting for statistical significance and a sufficient duration, you risk making decisions based on unreliable data. Patience is key; let the data mature and stabilize before drawing conclusions.

Document Everything

Comprehensive documentation of your A/B tests is invaluable. For each test, record:

- The hypothesis

- The variable tested

- The control and variant designs

- The target metrics

- The start and end dates

- The results, including statistical significance and practical impact

- Key learnings, even from failed tests

This creates a knowledge base that prevents repeating past mistakes, informs future test ideas, and helps onboard new team members. It’s a crucial aspect of building a culture of continuous improvement and preserving institutional knowledge.
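The checklist above maps naturally onto a small structured record. The following is only a sketch of what such a record might look like; the class name, field names, and sample values are assumptions for illustration, and a spreadsheet or wiki template would serve the same purpose.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date

@dataclass
class ExperimentRecord:
    hypothesis: str
    variable_tested: str
    control_description: str
    variant_description: str
    target_metrics: list[str]
    start_date: date
    end_date: date
    p_value: float          # statistical significance
    observed_lift: float    # practical impact, as an absolute rate difference
    learnings: str

    def to_json(self) -> str:
        """Serialize the record so it can be stored in a shared log."""
        data = asdict(self)
        # Dates are not JSON-serializable by default, so store ISO strings.
        data["start_date"] = self.start_date.isoformat()
        data["end_date"] = self.end_date.isoformat()
        return json.dumps(data, indent=2)

record = ExperimentRecord(
    hypothesis="If the CTA reads 'Get Started', more users will click, "
               "because it implies a lower-commitment next step.",
    variable_tested="CTA button text",
    control_description="Button text 'Submit'",
    variant_description="Button text 'Get Started'",
    target_metrics=["click_through_rate"],
    start_date=date(2024, 3, 1),
    end_date=date(2024, 3, 28),
    p_value=0.03,            # made-up result for the example
    observed_lift=0.004,     # made-up result for the example
    learnings="Lower-commitment wording helped; test on other forms next.",
)
print(record.to_json())
```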

Consider External Factors

External events, holidays, promotional campaigns, or even changes in overall market trends can influence your A/B test results. Be aware of these factors and, if possible, avoid running critical tests during periods of unusual traffic or activity. If an external factor is unavoidable, try to account for its potential impact during your analysis. Maintaining data quality throughout your experiments is also vital, avoiding issues from setup errors or technical glitches.

By diligently following these best practices, businesses can maximize the effectiveness of their A/B testing efforts, leading to more accurate insights and sustained digital growth.

Tools and Platforms for A/B Testing

The market offers a wide array of tools and platforms designed to facilitate A/B testing, ranging from free options for basic experiments to comprehensive enterprise solutions with advanced features. Choosing the right tool depends on your specific needs, technical capabilities, and budget. Understanding these options is part of putting A/B testing into practice.

Here are some popular and notable A/B testing tools:

- Google Analytics 4 (GA4): Google Optimize was sunset in September 2023, and GA4 does not include a built-in experiment editor of its own. Instead, it is typically paired with third-party testing tools that send experiment data into GA4. This makes it an appealing measurement layer for small to mid-sized businesses already using Google Analytics, since test results can be analyzed alongside existing analytics data.

- VWO (Visual Website Optimizer): A long-time leader in the experimentation space, VWO offers a broad suite of testing capabilities including A/B, multivariate, and split URL testing. It’s known for its Visual Editor, allowing users to create variations without extensive coding, and features advanced targeting rules and funnel tracking. VWO is suitable for data-heavy agencies and those needing advanced multivariate testing.

- Optimizely: Often considered an enterprise-grade platform, Optimizely provides robust A/B testing, multivariate testing, and personalization capabilities. It caters to larger organizations looking for extensive experimentation features and scalability.

- AB Tasty: This platform offers an extensive set of features, including multichannel testing (web pages, mobile apps), tailored product recommendations, and advanced ROI analysis. AB Tasty is recognized for its personalization features based on user behavior and location.

- Convert Experiences: Convert is a privacy-focused A/B testing platform that supports A/B, multivariate, and split tests. It offers a Visual Editor and code editors for technical setups, along with advanced audience targeting options including device, geographic, behavioral, and custom JS conditions.

- Instapage: While primarily a landing page and conversion optimization platform, Instapage includes server-side A/B testing capabilities. It’s ideal for agencies focused on landing pages and conversion rate optimization (CRO) that need design, testing, and analytics in one tool, featuring built-in heatmaps and real-time analytics.

- Crazy Egg: Best known for its heatmaps and scroll maps, Crazy Egg also includes a basic A/B testing feature. It’s a user-friendly option for smaller teams or those new to optimization, offering good visual feedback.

- Unbounce: Similar to Instapage, Unbounce is geared towards landing page building and optimization, with integrated A/B testing functionality. It provides templates and a responsive interface for designing and testing pages, with some AI models for variant optimization.

- LaunchDarkly: Geared towards product and engineering teams, LaunchDarkly is a feature management and experimentation platform. It allows teams to run experiments through feature flags, gradually roll out changes, and test functionality at the code level, supporting backend, mobile, and infrastructure experimentation.

When selecting a tool, consider factors such as:

- Ease of Use: Does it require extensive coding knowledge, or does it offer visual editors?

- Features: Does it support A/B, multivariate, split URL, and personalization?

- Integrations: Does it connect with your existing analytics, CRM, or marketing automation platforms?

- Scalability: Can it handle your current and future traffic volumes and testing needs?

- Reporting and Analytics: Does it provide clear, actionable insights and statistical significance calculations?

- Privacy and Compliance: Does it adhere to data privacy regulations like GDPR?

By carefully evaluating these tools, businesses can equip themselves with the technology needed to execute effective A/B testing programs.

Potential Pitfalls and How to Avoid Them

While A/B testing is a powerful optimization tool, it’s not without its challenges. Numerous mistakes can undermine the validity of your tests and lead to flawed decisions. Being aware of these common pitfalls and knowing how to avoid them is crucial for successful experimentation.

1. Not Having a Clear Hypothesis

One of the most frequent mistakes is running tests without a well-defined hypothesis. Starting an experiment based on a vague idea or “gut feeling” makes it difficult to measure success or understand the underlying reasons for any observed changes.

- Solution: Always formulate a clear, testable hypothesis that outlines the change, the expected outcome, and the reasoning behind it. Base your hypothesis on existing data, user research, or identified pain points.

2. Testing Too Many Elements at Once

This is a classic error that invalidates results. If you change multiple variables (e.g., headline, image, and button color) in one variant, you won’t be able to pinpoint which specific change drove the performance difference.

- Solution: Test one variable at a time. This ensures that any observed changes can be directly attributed to the specific modification, providing clear insights. If you need to test multiple related changes, consider multivariate testing, which requires significantly higher traffic to reach statistical significance.
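The traffic cost of multivariate testing follows from simple counting: every combination of options becomes its own group that needs enough visitors. The sketch below illustrates this with hypothetical factors and a hypothetical per-group sample size.

```python
from itertools import product

def multivariate_cells(factors):
    """List every combination of options across all tested elements."""
    names = list(factors)
    return [dict(zip(names, combo)) for combo in product(*factors.values())]

def total_visitors_needed(factors, visitors_per_cell):
    """Total traffic if each combination needs the same sample size."""
    return len(multivariate_cells(factors)) * visitors_per_cell

factors = {
    "headline": ["original", "benefit-led"],
    "image": ["product", "lifestyle"],
    "button_color": ["blue", "green", "orange"],
}
cells = multivariate_cells(factors)
print(len(cells))                               # 2 * 2 * 3 = 12 combinations
print(total_visitors_needed(factors, 30_000))   # 360000
```

A plain A/B test with the same per-group requirement would need only two groups, which is why multivariate tests are usually reserved for high-traffic pages.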

3. Stopping Tests Too Early (Peeking)

Often referred to as “peeking” in the industry, this mistake involves repeatedly checking results and ending an A/B test prematurely because one variation appears to be winning based on initial data. Early leads can often be random fluctuations, and stopping early means you might act on a false positive.

- Solution: Let your test run for its predetermined duration and sample size before judging statistical significance. A general guideline is 2-4 weeks to capture full weekly cycles and sufficient data. Use A/B testing calculators to estimate the required sample size and duration before launch.
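The effect of stopping on an early lead can be demonstrated with a small simulation of A/A tests, where both groups share the same true conversion rate and any "significant" result is therefore a false positive. This is an illustrative sketch with arbitrary parameters (number of experiments, number of interim looks, traffic per look), not a benchmark.

```python
import math
import random
from statistics import NormalDist

def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return 1.0  # no variation in outcomes, so no evidence of a difference
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def simulate_false_positive_rates(experiments=200, looks=10, visitors_per_look=200,
                                  rate=0.10, alpha=0.05, seed=0):
    """Return (rate when stopping at any significant look, rate at final look only)."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(experiments):
        conv_a = conv_b = n = 0
        significant_at_any_look = False
        for _ in range(looks):
            # Both groups draw from the SAME true rate: there is no real effect.
            conv_a += sum(rng.random() < rate for _ in range(visitors_per_look))
            conv_b += sum(rng.random() < rate for _ in range(visitors_per_look))
            n += visitors_per_look
            p = p_value(conv_a, n, conv_b, n)
            if p < alpha:
                significant_at_any_look = True
        peeking_hits += significant_at_any_look
        fixed_hits += p < alpha  # p here is the value at the final look
    return peeking_hits / experiments, fixed_hits / experiments

peeking_rate, fixed_rate = simulate_false_positive_rates()
print(f"stop at first significant look: {peeking_rate:.1%}")
print(f"evaluate once at the end:       {fixed_rate:.1%}")
```

Because the final look is one of the interim looks, the peeking rate can never be lower than the fixed-horizon rate, and in practice it tends to be noticeably higher than the nominal 5%.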

4. Ignoring Statistical Significance

Even if a variant shows a higher conversion rate, if the results are not statistically significant, the difference may well be due to chance. Implementing non-significant “winners” can lead to negative or negligible long-term impacts.

- Solution: Understand and apply statistical significance correctly. Aim for a confidence level of at least 95%. Ensure your testing tool provides this metric or use a dedicated calculator. Focus on both statistical and practical significance; a statistically significant but tiny improvement might not be worth implementing.

5. Not Accounting for External Factors and Seasonality

Website traffic and user behavior are rarely constant throughout the year. Seasonal trends (e.g., holidays, promotional periods) or external events can influence test results. Running a test during an atypical period might yield results that don’t generalize to regular traffic.

- Solution: Be mindful of your business cycles and external events. Try to run tests during stable periods. If testing during an event is unavoidable, segment your data to understand the event’s influence or acknowledge the specific context of the results.

6. Testing Low-Traffic or Inconsequential Pages/Elements

A/B testing requires a sufficient volume of traffic to achieve statistical significance within a reasonable timeframe. Testing minor changes on low-traffic pages, or elements that have little impact on key business goals, can lead to prolonged tests or insignificant results.

- Solution: Prioritize high-traffic pages and elements that are critical to your conversion funnels and business objectives. Use analytics to identify pages with high drop-off rates or significant user interaction.

7. Not Documenting Test Results and Learnings

Failing to document past tests means you lose valuable institutional knowledge and risk repeating mistakes or overlooking insights.

- Solution: Create a comprehensive record for every A/B test. This includes hypotheses, variations, metrics, results, and especially the “why” behind the performance. Even failed tests offer learnings.

By proactively addressing these common pitfalls, businesses can ensure their A/B testing programs are robust, efficient, and consistently deliver valuable insights.

The Future of Optimization: Beyond Basic A/B Testing

The landscape of digital optimization is continuously evolving. Traditional A/B testing remains a foundational practice, but emerging trends and technologies are pushing the boundaries of what’s possible, offering more sophisticated ways to understand user behavior and refine digital experiences. Knowing where experimentation is heading rounds out a complete picture of A/B testing.

Increased Automation and Enhanced Usability

One significant trend is the increasing use of automation in the testing process, which helps streamline efforts and reduce manual work. User-friendly interfaces with intuitive design elements are making complex testing scenarios more accessible, shifting experimentation back towards marketers and lessening the demand on developers. This focus on usability allows teams to run more experiments with greater efficiency, empowering them to continuously optimize without extensive technical expertise. That ease of use will open experimentation to a wider range of teams.

Advanced Statistical Tools and Training

As experimentation becomes more central, there’s a growing adoption of sophisticated statistical tools and a greater investment in statistical training for teams. These tools provide a more comprehensive analysis of experiment data, enabling a clearer understanding of results. This emphasis ensures that teams can navigate the nuances of A/B testing and make more informed, data-backed decisions. The move towards deeper statistical understanding reinforces the scientific rigor of A/B testing.

Hybrid Experimentation (Client-Side & Server-Side)

With growing concerns around data privacy and compliance (like GDPR) and the limitations of client-side tracking, there’s a shift towards hybrid experimentation. This involves integrating both client-side (browser-based) and server-side testing solutions under a single platform. Server-side testing allows for more secure data collection and the ability to test deeper functionality, making it crucial for maintaining high-quality experimentation while protecting user data. This blend offers greater flexibility and control for complex testing scenarios.
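
On the server side, variant assignment is often done by hashing a stable user identifier, so the same user always sees the same version without relying on browser storage. The sketch below illustrates that general idea; the function name, the experiment key format, and the choice of SHA-256 are assumptions for illustration rather than how any specific platform works.

```python
import hashlib


def assign_variant(user_id, experiment, variant_share=0.5):
    """Deterministically assign a user to "A" or "B" for one experiment.

    The experiment name is mixed into the hash so that the same user can land
    in different groups across different experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "B" if bucket < variant_share else "A"


if __name__ == "__main__":
    # Hypothetical identifiers.
    for uid in ("user-1", "user-2", "user-3"):
        print(uid, assign_variant(uid, "pricing-page-layout"))
```

Because the assignment is a pure function of the user ID and experiment name, it needs no stored state and gives the same answer on every server.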

Moving Beyond Basic Comparisons: Multivariate and Personalization

While classic A/B testing compares two versions, the future involves more complex forms of experimentation:

- Multivariate Testing (MVT): This allows for testing multiple variables simultaneously to understand how different combinations interact and affect outcomes. While it requires significantly higher traffic, MVT can reveal interaction effects between elements that sequential A/B tests would miss.

- Personalization: Leveraging comprehensive data, experimentation can become highly targeted. The ability to segment and test specific user groups based on detailed data profiles leads to more effective and personalized experiences. Personalized content blocks, for instance, can have a substantially greater impact than generic ones. This shift from a one-size-fits-all approach to tailored experiences extends A/B testing to individual user journeys.
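
To illustrate why multivariate testing needs so much more traffic, the sketch below enumerates every combination in a full-factorial design and multiplies by the visitors needed per combination. The factor names and values are invented for the example, and the helper functions are hypothetical.

```python
from itertools import product


def full_factorial(factors):
    """Return every combination of factor values as a list of dicts."""
    names = list(factors)
    return [
        dict(zip(names, combo))
        for combo in product(*(factors[name] for name in names))
    ]


def total_traffic_needed(factors, visitors_per_combination):
    """Total visitors if every combination needs the same sample size."""
    return len(full_factorial(factors)) * visitors_per_combination


if __name__ == "__main__":
    # Invented factors: 2 headlines x 3 button colors x 2 hero images.
    factors = {
        "headline": ["H1", "H2"],
        "button_color": ["green", "orange", "blue"],
        "hero_image": ["photo", "illustration"],
    }
    print(len(full_factorial(factors)), "combinations")
    print(total_traffic_needed(factors, 10_000), "visitors in total")
```

Each added factor multiplies the number of combinations, so the traffic requirement grows much faster than in a two-version test.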

Unified Experimentation Across Teams

Companies are increasingly breaking down silos between marketing, product, and engineering teams to foster a unified approach to experimentation. This collaboration ensures that all departments contribute to and benefit from a shared culture of data-driven decision-making, and that they work together to build better, more user-centric digital experiences.

These emerging trends paint a picture of a more agile, data-rich, and strategically integrated future for optimization. By embracing these advancements, businesses can move beyond basic A/B testing to unlock even greater potential for growth and innovation.

Conclusion: Embracing Continuous Improvement with A/B Testing

In a digital world that is constantly shifting and evolving, the ability to adapt and optimize is not just an advantage; it’s a necessity. This guide has explored A/B testing, a fundamental practice that empowers businesses to make informed, data-driven decisions rather than relying on guesswork. By systematically comparing variations of digital assets, from website elements to entire campaigns, organizations can pinpoint exactly what resonates with their audience and drives desired outcomes.

As this guide has shown, A/B testing involves meticulous steps: forming clear hypotheses, isolating variables, creating precise variations, and running tests for sufficient durations to achieve statistical significance. The benefits are profound, leading to improved conversion rates, a deeper understanding of user behavior, enhanced customer experiences, and a reduction in the risks associated with implementing new changes. From optimizing landing pages and email campaigns to refining product features and advertising creatives, A/B testing provides the empirical evidence needed to succeed.

However, the power