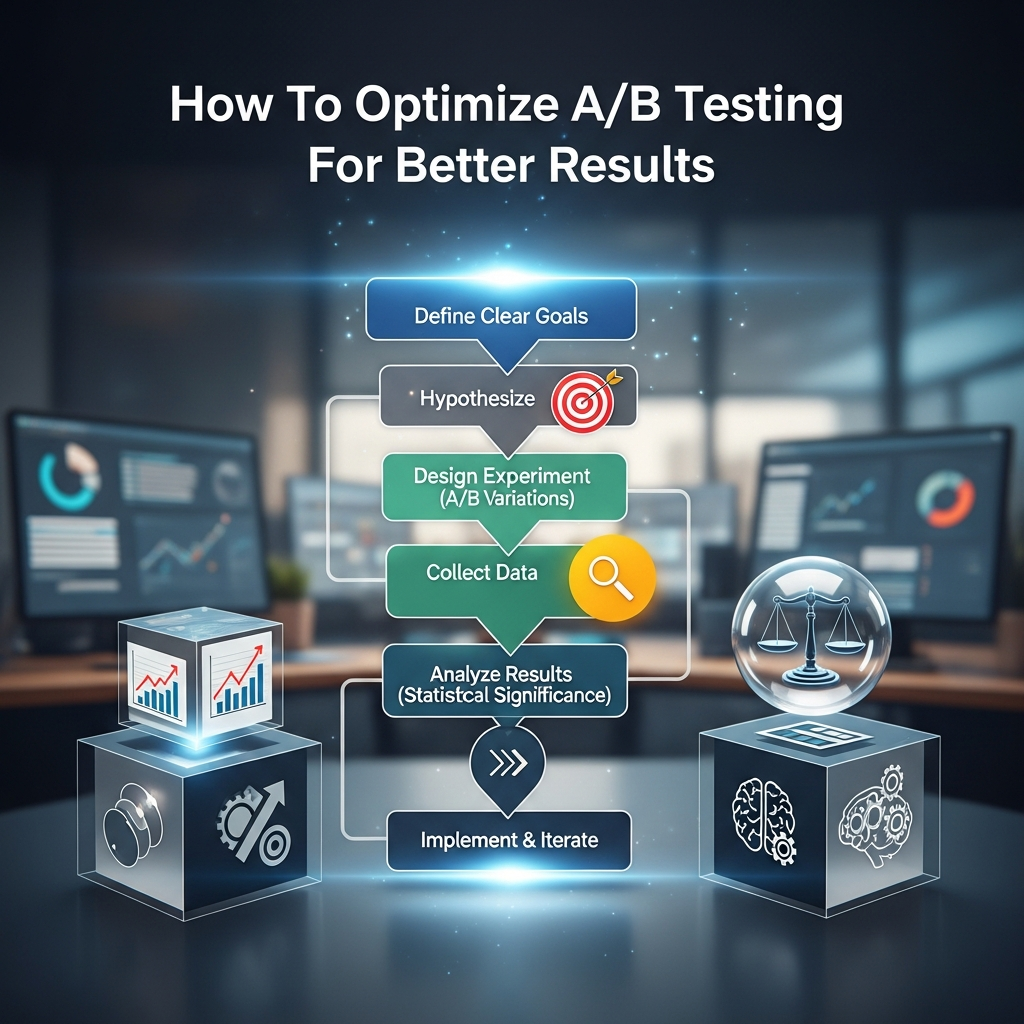

In the ever-evolving digital landscape, making informed decisions is paramount for sustained growth. Guesswork can be costly, leading to wasted resources and missed opportunities. This is where the power of experimentation, specifically A/B testing, becomes indispensable. To truly unlock its potential and drive meaningful improvements, understanding how to optimize A/B testing for better results is not just an advantage, but a necessity. A/B testing allows you to compare two versions of a digital asset – be it a webpage, an email, or an advertisement – to see which one performs more effectively against a specific goal. By systematically testing variations, businesses can move beyond assumptions and base their strategies on concrete data. This article will guide you through the latest best practices and strategies on how to optimize A/B testing for better results, ensuring your efforts translate into tangible gains and a deeper understanding of your audience.

The Foundation: Crafting a Robust Hypothesis

Every successful experiment begins with a clear, well-defined hypothesis. Without one, your tests can become aimless, making it difficult to extract actionable insights, regardless of the outcome. A strong hypothesis transforms testing from mere button-swapping into a structured learning process.

To effectively formulate a hypothesis for A/B testing, focus on these elements:

- Problem Identification: Pinpoint a specific issue or area for improvement based on data (e.g., high bounce rate on a landing page, low click-through rate on a call-to-action).

- Proposed Solution: Suggest a specific change you believe will address the identified problem.

- Expected Outcome: Predict the measurable impact of your change.

- Reasoning: Explain why you believe this change will lead to the expected outcome, ideally backed by existing data, user research, or psychological principles.

For example, instead of a vague “Let’s try a different headline,” a robust hypothesis would be: “If we change the headline on our product page to highlight the immediate benefit of ‘Save 20% Today’ instead of ‘Our Amazing Products,’ we will see a 15% increase in add-to-cart clicks, because emphasizing immediate value tends to motivate users more effectively.” This structured approach is fundamental to how to optimize A/B testing for better results.

A well-formed hypothesis ensures that your experimentation is focused and purposeful. It acts as an anchor, keeping your test aligned with broader business objectives and preventing you from running experiments in a vacuum. Even if a test “loses,” meaning your variant doesn’t outperform the original, a clear hypothesis still yields valuable learning: it tells you which specific assumption about user behavior did not hold, which can inform future tests and deepen your understanding of your audience.

Moreover, a strong hypothesis helps in preventing misinterpretation of results. When results align with expectations, it’s easy to fall into confirmation bias and roll out changes too quickly without deeper analysis. A clear hypothesis forces intentional interpretation: Did the result validate the underlying behavioral assumption? Did secondary metrics improve or degrade? Do all stakeholders agree on the findings? This disciplined approach is essential for continuously learning and improving, making it a cornerstone of how to optimize A/B testing for better results.

Setting Up Your Experiment for Success

Once you have a clear hypothesis, the next crucial step is to meticulously set up your experiment. Flawed setup can lead to skewed data and misleading conclusions, undermining all your efforts to understand how to optimize A/B testing for better results.

Isolating Variables: The Golden Rule

One of the most frequent mistakes in A/B testing is attempting to test too many changes simultaneously. When multiple elements are altered between version A and version B, it becomes impossible to definitively pinpoint which specific change, or combination of changes, was responsible for the observed outcome. If conversions increase, was it the new headline, the button color, or a different image? You simply won’t know.

Therefore, the golden rule of A/B testing is to isolate one variable per test. This means that your control version (A) and your variant version (B) should be identical in every aspect except for the single element you wish to test. For instance, if you’re testing the impact of a headline, everything else on the page – images, button text, layout – should remain consistent. This disciplined approach provides clear, unambiguous insights into how isolated changes impact your metrics, which is key to how to optimize A/B testing for better results.

While focusing on a single variable per test is ideal for clear insights, it’s also important to consider the overall user experience. Significant changes can disrupt user flow, potentially causing bounces even if the individual element being tested is “better.” Maintaining consistency in layout and content structure between variations helps ensure that users aren’t disoriented by radical differences, thus keeping the focus on the variable under scrutiny. This thoughtful design process is part of how to optimize A/B testing for better results.

Defining Clear Metrics and Goals

Before launching any test, you must clearly define what success looks like. What specific metric are you trying to influence? Is it a higher conversion rate, increased click-throughs, reduced bounce rate, or extended time on site? Your primary success metric should directly align with your business goals. If your overarching business objective is to reduce customer churn, then testing a button color on the homepage might improve engagement but could have no meaningful effect on churn.

Prioritizing elements that have a high impact on your key performance indicators (KPIs) is essential. Use existing analytics data to identify pages or elements that significantly influence user actions, such as your homepage, product pages, checkout process, or lead generation forms. Focusing your testing efforts on these high-traffic, high-potential areas will yield the most impactful improvements. For example, if your analytics reveal a high checkout abandonment rate, testing ways to simplify that process would be a high-priority, high-impact test. This strategic selection of what to test is a core aspect of how to optimize A/B testing for better results.

Beyond a primary success metric, consider tracking secondary metrics as well. While your primary metric might improve, a change could inadvertently negatively impact other important user behaviors. For example, a shorter form might increase lead volume (primary metric) but decrease lead quality (secondary metric). A holistic view of your data ensures that you make well-rounded decisions and truly understand how to optimize A/B testing for better results.

Determining the Right Sample Size and Duration

One of the most critical aspects of setting up an A/B test is determining the appropriate sample size and duration. Running tests on too small an audience or stopping them prematurely can lead to misleading results and false positives.

Sample Size Calculation: The required sample size depends on several factors:

- Current Conversion Rate: A lower baseline conversion rate generally requires a larger sample size to detect the same relative lift.

- Minimum Detectable Effect (MDE): This is the smallest percentage change (lift) you want to be able to reliably detect. A smaller MDE requires a larger sample size.

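- Statistical Significance (Alpha): The significance level is typically set at 0.05 (often described as 95% confidence). This means that if there were truly no difference between the versions, a difference at least as large as the one observed would appear less than 5% of the time by random chance alone.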

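- Statistical Power (1 - Beta): Commonly set at 80%, representing the probability of detecting a real effect of at least the MDE if one exists. Beta is the probability of missing such an effect (a false negative), so 80% power corresponds to a beta of 0.20.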

Online sample size calculators (available from tools like AB Tasty, Statsig, or Optimizely) are invaluable for this step. They help ensure your test is adequately powered to detect meaningful differences. Without a sufficient sample size, even a genuine improvement might not reach statistical significance, making your results inconclusive. This precise planning is fundamental to how to optimize A/B testing for better results.
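
To make the relationship between these four inputs concrete, here is a minimal sketch of the kind of calculation such calculators perform, assuming a two-sided two-proportion z-test with equal group sizes. The function name and the example numbers are illustrative assumptions, and individual tools may use slightly different formulas.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_mde, alpha=0.05, power=0.80):
    """Approximate visitors needed in EACH group (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)        # rate the variant would need to reach
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for a two-sided test
    z_power = NormalDist().inv_cdf(power)          # critical value for the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    n = (z_alpha + z_power) ** 2 * variance / (p2 - p1) ** 2
    return math.ceil(n)


# Hypothetical scenario: 5% baseline conversion, 20% relative lift to detect.
print(sample_size_per_variant(0.05, 0.20))
# Halving the MDE roughly quadruples the required sample.
print(sample_size_per_variant(0.05, 0.10))
```

Because the detectable difference is squared in the denominator, small reductions in the MDE are expensive in traffic, which is why it pays to settle the MDE before the test starts.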

Test Duration: Equally important is allowing your test to run for an adequate duration. Stopping a test as soon as the numbers look good, usually the result of repeatedly “peeking” at interim results, is a common mistake that can lead to false conclusions. Initial results can fluctuate wildly, showing early “wins” or “losses” that don’t reflect long-term performance. It’s recommended to run tests for at least one to two full business cycles (e.g., two weeks) to account for weekly variations in user behavior. Factors like weekdays vs. weekends, promotions, or even time of day can influence results, so capturing a full cycle helps normalize the data. Patience and adherence to a predefined test duration are crucial.
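
As a rough planning aid, the required sample size can be converted into a run time and rounded up to whole weeks. The sketch below assumes traffic is steady from day to day and uses a two-week floor to mirror the guideline above; the function name and parameters are hypothetical.

```python
import math


def planned_duration_days(sample_size_per_variant, num_variants,
                          daily_eligible_visitors, min_weeks=2):
    """Days to run a test, rounded up to full weeks with a minimum length."""
    total_needed = sample_size_per_variant * num_variants
    raw_days = math.ceil(total_needed / daily_eligible_visitors)
    # Round up to whole weeks so every weekday is represented equally.
    full_weeks = max(min_weeks, math.ceil(raw_days / 7))
    return full_weeks * 7


# Hypothetical inputs: 8,155 visitors per group, two groups, 1,500 visitors a day.
print(planned_duration_days(8155, 2, 1500))
```

If the answer comes out at several months, that is usually a sign to test a bolder change (a larger MDE) or a higher-traffic page rather than to wait it out.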

Ensuring Technical Setup and Data Quality

The integrity of your data is the bedrock of any reliable A/B test. Compromised data, whether due to setup errors or issues during the testing phase, can distort results and lead to flawed decisions.

Proper Technical Setup:

- Identical Pages Except for One Variable: Ensure that the technical implementation of your control and variant pages is robust and that the only difference is the element you are testing. Inconsistencies can skew results.

- Full Functionality: Both versions of your test should be fully functional and load correctly across all browsers and devices. A broken variant will obviously perform poorly, leading to invalid conclusions.

- Random Traffic Distribution: Traffic should be split evenly and randomly between the control and variant to prevent sampling bias. Most A/B testing tools handle this automatically, but it’s important to verify.

- Tracking and Analytics Integration: Confirm that your analytics tools are correctly tracking data for both versions. Event mapping for all relevant elements should be accurate.
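
One way to verify the traffic split described above is a sample ratio mismatch check: compare the visitor counts each group actually received against the split you intended. The sketch below is an assumption about how you might do this by hand with a chi-square goodness-of-fit test; your testing tool may already report something similar.

```python
import math


def sample_ratio_mismatch_p_value(control_visitors, variant_visitors,
                                  expected_control_share=0.5):
    """P-value for the observed split, given the intended allocation."""
    total = control_visitors + variant_visitors
    expected_control = total * expected_control_share
    expected_variant = total - expected_control
    chi_square = (
        (control_visitors - expected_control) ** 2 / expected_control
        + (variant_visitors - expected_variant) ** 2 / expected_variant
    )
    # With one degree of freedom, the chi-square tail equals erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(chi_square / 2))


# Hypothetical counts from a test that was meant to split 50/50.
print(sample_ratio_mismatch_p_value(5000, 5050))  # small imbalance
print(sample_ratio_mismatch_p_value(5000, 5300))  # larger imbalance
```

A very small p-value here suggests the assignment or tracking is broken, in which case the conversion results should not be trusted until the cause is found.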

Data Quality Assurance:

- A/A Testing: Before launching a live A/B test, consider running an A/A test (comparing two identical versions). This helps validate your testing setup and ensures that your system reliably reports data when no actual difference exists.

- Monitoring Data in Real-Time: While you shouldn’t stop a test early, monitoring data in real-time can help catch any technical issues or anomalies that might skew results, such as a sudden drop in traffic or a tracking error.

- Cleaning Outliers: Sometimes, extreme data points (outliers) can disproportionately influence results. Developing a strategy to identify and appropriately handle these can improve data accuracy.
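
For revenue-style metrics, one common way to handle outliers is to cap extreme values rather than delete them. The following is a minimal sketch of that idea; the 99% cutoff and the function name are assumptions, and the right threshold depends on your data.

```python
def winsorize_upper(values, upper_quantile=0.99):
    """Cap values above the chosen quantile instead of dropping them."""
    if not values:
        return []
    ordered = sorted(values)
    # Index of the cap value, using the floor of the linear rank position.
    cap = ordered[int(upper_quantile * (len(ordered) - 1))]
    return [min(v, cap) for v in values]


# Hypothetical order values: 99 ordinary orders and one very large one.
orders = list(range(1, 100)) + [5000]
capped = winsorize_upper(orders)
print(sum(orders) / len(orders), sum(capped) / len(capped))
```

Whatever rule you choose, decide it before looking at the results and apply it identically to both groups.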

By meticulously addressing these technical and data quality aspects, you build a reliable foundation for your experiments, which is essential to how to optimize A/B testing for better results.

Executing and Monitoring Your Tests Effectively

With a well-planned setup, the execution phase requires diligence to maintain the integrity of your experiment and ensure you gather valid data to understand how to optimize A/B testing for better results.

Randomization and Control Groups

The core principle behind A/B testing is comparing two groups that are as similar as possible, with the only difference being the variable you are testing. This is achieved through randomization and the use of a control group.

- Random Assignment: Visitors should be randomly assigned to either the control group (seeing version A) or the variant group (seeing version B). This randomness helps ensure that any observed differences between the groups are due to the change you introduced, rather than pre-existing differences in the audience segments. Most testing platforms automatically handle this split, often at a 50/50 ratio.

- Clear Control Group: The control group is the unaltered version of your webpage or experience. It serves as the benchmark against which your new variations are measured. It’s crucial that the control group remains consistent throughout the test to provide a reliable comparison point. Any external variables influencing the control more than the variant can skew results.
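
Random assignment also needs to be stable, so a returning visitor keeps seeing the same version. A common pattern is to hash a user identifier together with the experiment name. This sketch assumes you have a persistent `user_id`; the function and experiment names are hypothetical, and commercial platforms handle this step for you.

```python
import hashlib


def assign_variant(user_id, experiment_name, variant_share=0.5):
    """Deterministically map a user to 'control' or 'variant'."""
    key = f"{experiment_name}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the first 32 bits of the hash into a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "variant" if bucket < variant_share else "control"


# The same user always lands in the same group for a given experiment.
print(assign_variant("user-123", "headline-test"))
print(assign_variant("user-123", "headline-test"))
```

Including the experiment name in the hash keeps assignments independent across experiments, so the same users are not always grouped together.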

Proper segmentation and targeting are also vital. Your test groups should be as similar as possible in terms of demographics, behavior, or other relevant characteristics to avoid unintended biases. Ignoring user segmentation can be a mistake, as an improvement for one segment might not hold true for another. Careful consideration of your audience ensures that the insights you gain are truly representative and applicable to your target users, refining your approach to how to optimize A/B testing for better results.

Avoiding Premature Stopping and “Peeking”

Patience is a virtue in A/B testing. As mentioned, repeatedly “peeking” at interim results and stopping as soon as one variation looks better is a pervasive mistake. It’s tempting to declare a winner (or loser) when one variation appears to be performing significantly better or worse in the initial days. However, early results are based on little data and are often misleading.

Part of the explanation is “regression to the mean.” What looks like a strong performance in the short term might simply be a statistical anomaly that will normalize as more data is collected. Conversely, a promising variation might underperform initially by chance, leading you to abandon a potentially successful idea. In addition, every extra look at the data is another chance for random noise to cross the significance threshold, so stopping at the first significant result pushes the false positive rate well above the nominal 5%. The best way to mitigate this risk is to determine your sample size and test duration upfront and then let the test run its full course without intervention. Sticking to your predefined plan ensures that the test has the statistical power you designed it for, making your conclusions reliable.
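
The cost of peeking can be illustrated with a small simulation. The sketch below runs many A/A-style experiments in which both groups share the same true conversion rate, then compares how often a "winner" is declared when you check at every interim look versus only at the end. All parameters are illustrative assumptions.

```python
import math
import random


def z_test_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rates."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return math.erfc(abs(z) / math.sqrt(2))


def simulate_false_positive_rates(true_rate=0.10, users_per_arm=1000, looks=10,
                                  experiments=500, alpha=0.05, seed=7):
    """Return (rate when stopping at any significant look, rate at final look only)."""
    rng = random.Random(seed)
    step = users_per_arm // looks
    peeking_hits = fixed_hits = 0
    for _ in range(experiments):
        conv_a = conv_b = 0
        significant_at_any_look = False
        p_value = 1.0
        for look in range(1, looks + 1):
            # Both arms draw from the SAME rate, so any "win" is a false positive.
            conv_a += sum(rng.random() < true_rate for _ in range(step))
            conv_b += sum(rng.random() < true_rate for _ in range(step))
            p_value = z_test_p_value(conv_a, look * step, conv_b, look * step)
            if p_value < alpha:
                significant_at_any_look = True
        peeking_hits += significant_at_any_look
        fixed_hits += p_value < alpha  # only the final look counts here
    return peeking_hits / experiments, fixed_hits / experiments


peeking_rate, fixed_rate = simulate_false_positive_rates()
print(f"peeking: {peeking_rate:.1%}, fixed horizon: {fixed_rate:.1%}")
```

Since the final look is one of the interim looks, the peeking rate can never be lower than the fixed-horizon rate, and in practice it is noticeably higher.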

Furthermore, changing traffic allocation percentages during the testing period can also skew your results until the data normalizes. Once a test is live, it’s generally best to avoid altering any parameters until the experiment is complete. If you identify a problem or think of an improvement during the test, make a note of it for future tests, but do not change the current live experiment. Such discipline is fundamental to how to optimize A/B testing for better results.

Handling External Factors

While you strive to isolate variables within your test, the external environment is rarely static. Various external factors can impact your test results if not accounted for.

- Seasonality and Trends: Business cycles, holidays, or seasonal trends can significantly affect user behavior. Running a test during a major sale event versus a regular period will yield different results. Ensure your test duration spans periods that represent typical user behavior or account for specific events if testing their impact.

- Marketing Campaigns: Concurrent marketing campaigns (e.g., email blasts, paid advertisements) driving traffic to your tested pages can influence results. Ideally, keep marketing activities consistent for both control and variant groups, or segment your test to analyze the impact on specific campaign traffic.

- News and Events: Unforeseen news events, competitor actions, or even technical outages can temporarily or permanently alter user behavior. While difficult to predict, being aware of such occurrences can help you contextualize unusual test results.

Monitoring these external factors and their potential influence is crucial for accurate interpretation of your test outcomes. Sometimes, an apparent “win” or “loss” might be attributable to an external event rather than your variation. By being vigilant about the broader context, you can gain a more nuanced understanding of your test results and better grasp how to optimize A/B testing for better results.

Interpreting Results with Statistical Rigor

After running your test for the predetermined duration and collecting sufficient data, the next critical step is to analyze the results accurately. Misinterpreting data can lead to implementing changes that do more harm than good.

Understanding Statistical Significance and Confidence Levels

Statistical significance is a cornerstone of A/B testing analysis. It helps you determine whether the observed difference between your control and variant is a real effect or simply due to random chance.

- Confidence Level: This is one minus the significance level you choose before the test. Most commonly, a 95% confidence level is used, meaning that if there were no actual difference between the two versions, there would be only a 5% chance of seeing a difference large enough to be declared significant (a Type I error, or false positive). It is not the probability that the variant is truly better.

- P-value: The p-value is the probability of observing results as extreme as, or more extreme than, the results you obtained, assuming the null hypothesis (i.e., no difference between versions) is true. If your p-value is less than your chosen significance level (e.g., 0.05 for 95% confidence), you reject the null hypothesis and deem the results statistically significant.
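
To show where a p-value comes from, here is a minimal sketch of a two-proportion z-test, one common way to compare conversion rates. It assumes large samples and a two-sided test; the counts are made up, and your platform may use a different method, such as a Bayesian model.

```python
import math


def two_proportion_z_test(conversions_a, visitors_a, conversions_b, visitors_b):
    """Return (z statistic, two-sided p-value) for B versus A."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    # Under the null hypothesis both groups share one pooled conversion rate.
    pooled = (conversions_a + conversions_b) / (visitors_a + visitors_b)
    standard_error = math.sqrt(
        pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b)
    )
    z = (rate_b - rate_a) / standard_error
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical data: 400 of 8,000 visitors convert on A, 480 of 8,000 on B.
z, p_value = two_proportion_z_test(400, 8000, 480, 8000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

With these made-up numbers the z statistic is roughly 2.77 and the p-value is about 0.006, which is below 0.05, so the difference would be called statistically significant at the 95% confidence level.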

It’s vital to decide on your desired confidence level before the test begins and adhere to it when evaluating results. Many A/B testing platforms report significance or confidence figures directly, making results easier to interpret. This rigorous statistical approach helps ensure that decisions based on your A/B test are grounded in solid data.

Beyond Statistical Significance: Practical Impact

While statistical significance is crucial, it doesn’t always equate to practical significance. A result might be statistically significant but have a very small effect size – meaning the difference between A and B is too small to warrant the effort or cost of implementing the change.

Consider the following:

- Effect Size: How large is the actual difference in performance between your control and variant? A 0.01% increase in conversion, while statistically significant, might not be practically meaningful for your business.

- Business Impact: Always tie your statistical findings back to your business goals. Will implementing this change genuinely move the needle on revenue, customer acquisition, or user satisfaction?

- Costs of Implementation: Weigh the potential gains against the resources required to implement and maintain the winning variation. A small lift might not justify a complex development effort.

Platforms often provide metrics like “probability to win” which can simplify interpretation. By considering both statistical and practical significance, you can make smarter decisions about which changes to implement, truly understanding how to optimize A/B testing for better results.
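
One way to combine the two ideas is to look at a confidence interval for the lift and compare its lower bound with the smallest improvement that would justify the implementation cost. This is a sketch under a normal approximation; the threshold values and function names are assumptions you would replace with your own.

```python
import math
from statistics import NormalDist


def lift_confidence_interval(conversions_a, visitors_a, conversions_b, visitors_b,
                             confidence=0.95):
    """Confidence interval for the absolute difference in conversion rate (B - A)."""
    rate_a = conversions_a / visitors_a
    rate_b = conversions_b / visitors_b
    se = math.sqrt(rate_a * (1 - rate_a) / visitors_a
                   + rate_b * (1 - rate_b) / visitors_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = rate_b - rate_a
    return diff - z * se, diff + z * se


def is_practically_significant(interval, minimum_worthwhile_lift):
    """True only if even the pessimistic end of the interval clears the bar."""
    lower, _ = interval
    return lower >= minimum_worthwhile_lift


# Hypothetical data: 5.0% versus 6.0% conversion on 8,000 visitors each.
interval = lift_confidence_interval(400, 8000, 480, 8000)
print(interval)
print(is_practically_significant(interval, minimum_worthwhile_lift=0.005))
```

In this made-up case the interval excludes zero, so the lift is statistically significant, but its lower bound is below half a percentage point, so a team that needs at least that much to cover costs could not yet be confident the change is worth shipping.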

Common Pitfalls in Analysis

Even after successfully running a test, missteps in analysis can derail your efforts to understand how to optimize A/B testing for better results.

- Ignoring Secondary Metrics: As discussed, focusing solely on the primary metric can lead to implementing changes that negatively impact other important aspects of the user experience.

- Misinterpreting “Inconclusive” Results: Not every test will yield a clear winner. An inconclusive result simply means there wasn’t enough evidence to confidently say one version outperformed the other. It’s a learning, not a failure, and provides data to refine your next hypothesis. Don’t give up after one inconclusive test.

- Overestimating the Impact: Be realistic about the potential gains. Small changes rarely lead to revolutionary improvements. Avoid attributing massive success to minor tweaks without strong, long-term evidence.

- Failing to Document and Learn: Every test, regardless of its outcome, provides valuable insights. Document your hypotheses, methodologies, results, and learnings. This creates a knowledge base that prevents repeating mistakes and informs future experimentation. Building this collective intelligence is key to how to optimize A/B testing for better results.

Advanced Strategies to Enhance Your Experimentation

To truly master how to optimize A/B testing for better results, it’s beneficial to explore advanced techniques and strategic approaches that go beyond basic split testing.

Multivariate Testing: When to Use It

While A/B testing focuses on changing one element at a time, multivariate testing (MVT) allows you to test multiple variables simultaneously and understand how different elements interact with each other.

- Complexity: MVT is more complex than A/B testing, creating many combinations of variations. For example, testing two headlines, two images, and two call-to-action buttons would result in 2x2x2 = 8 different versions to test.

- Traffic Requirements: Due to the higher number of variations, MVT requires significantly more traffic to achieve statistical significance compared to A/B testing.

- Use Cases: MVT is ideal for high-traffic pages where you want to fine-tune multiple elements that you suspect interact, such as a landing page that has already been optimized through A/B tests. It helps uncover which specific elements or combinations have the biggest impact on conversion rates.
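
The combinatorial growth described above is easy to see by enumerating the versions. The sketch below builds every combination for the 2x2x2 example and shows how a fixed traffic budget is divided among them; the image and button labels are placeholders.

```python
from itertools import product

# Two options per element, as in the 2x2x2 example (labels are illustrative).
elements = {
    "headline": ["Save 20% Today", "Our Amazing Products"],
    "image": ["lifestyle", "product"],
    "cta": ["Buy now", "Add to cart"],
}


def enumerate_combinations(elements):
    """Return one dict per version, covering every combination of options."""
    names = list(elements)
    return [dict(zip(names, combo)) for combo in product(*elements.values())]


def visitors_per_combination(total_visitors, elements):
    """Traffic each version receives under an even split."""
    return total_visitors // len(enumerate_combinations(elements))


combinations = enumerate_combinations(elements)
print(len(combinations))
print(visitors_per_combination(40000, elements))
```

Adding a third option to just one element raises the count from 8 to 12 versions, which is why MVT is usually reserved for pages with plenty of traffic.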

Choosing between A/B testing and MVT depends on your goals, traffic volume, and resources. Use A/B testing for quick insights on single, significant changes, especially with limited traffic. Employ MVT when you have high traffic and want to dig deeper into how multiple elements interact to find the optimal combination. Many organizations effectively use both methods, starting with A/B tests to validate individual changes, then moving to MVT for fine-tuning a high-performing page. This layered approach demonstrates sophistication in how to optimize A/B testing for better results.

Personalization and Segmentation

The modern digital landscape increasingly emphasizes personalized experiences. Generic experiences can be significantly outperformed by tailored content.

- Segment-Specific Tests: Tailoring experiments to specific user subgroups can uncover insights masked by aggregate data. For instance, testing a different homepage layout for new visitors versus returning customers, or for users from different geographical regions, can yield profound improvements.

- Personalized Content Blocks: Implementing user-specific content based on behavior, demographics, or past purchase history can generate higher engagement and conversion rates. For example, a retail site might display different product recommendations based on a user’s browsing history.

- Dynamic Pricing: Experimenting with flexible pricing based on demand, inventory, and customer segments can also be a powerful form of personalization.
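
To see whether aggregate results are hiding segment-level differences, you can break conversion rates down by segment and variant. This is a minimal sketch assuming your analytics export can be reduced to (segment, variant, converted) records; the segment names are hypothetical.

```python
from collections import defaultdict


def conversion_by_segment(events):
    """Map (segment, variant) to its conversion rate."""
    counts = defaultdict(lambda: [0, 0])  # [conversions, visitors]
    for segment, variant, converted in events:
        counts[(segment, variant)][0] += int(converted)
        counts[(segment, variant)][1] += 1
    return {key: conv / visitors for key, (conv, visitors) in counts.items()}


# Tiny made-up sample: new and returning visitors across versions A and B.
events = [
    ("new", "A", True), ("new", "A", False),
    ("new", "B", True), ("new", "B", True),
    ("returning", "A", True), ("returning", "B", False),
]
for key, rate in sorted(conversion_by_segment(events).items()):
    print(key, rate)
```

The more segments you slice by, the more likely one of them shows a difference by chance alone, so treat a surprising segment result as a hypothesis for a follow-up test rather than a conclusion.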

Personalization in testing allows for refined segmentation, creating highly targeted experiments that maximize the impact of your campaigns and increase conversion rates. With the continuous advancement of testing platforms, personalization is becoming a key strategy for how to optimize A/B testing for better results.

Continuous Experimentation

Rather than viewing A/B testing as a one-off project, embracing a mindset of continuous experimentation is crucial for long-term growth. The digital world is constantly changing, and what works today might not work tomorrow.

- Iterative Testing: Successful optimization is an ongoing cycle of hypothesis generation, testing, analysis, and iteration. Don’t stop at one winning test; continually look for new opportunities to improve.

- Re-testing: What worked in the past might not hold true now. Periodically re-testing winning variations or key elements can ensure their continued effectiveness. User preferences and market conditions evolve.

- Long-Term Impact Analysis: Track the actual, long-term impact of implemented winning tests. Sometimes, a short-term win might not translate into sustained business value. This broader perspective is vital for how to optimize A/B testing for better results.

The Role of Tools and Platforms

The right tools can significantly streamline and enhance your experimentation efforts. Modern platforms offer sophisticated features that help you to better understand how to optimize A/B testing for better results.

- Experimentation Platforms: Tools like Optimizely, VWO (Visual Website Optimizer), AB Tasty, Kameleoon, Adobe Target, and Dynamic Yield are widely used platforms that offer comprehensive A/B and multivariate testing capabilities. They often include visual editors for easy test setup, robust analytics, and personalization features.

- Behavioral Analytics: Integrating with tools that provide heatmaps, scrollmaps, session replays, and funnel analysis (e.g., Hotjar, Crazy Egg, Qualaroo) can offer qualitative insights into why users behave the way they do. This rich contextual data can be invaluable for generating stronger hypotheses and understanding the underlying reasons behind test results.

- Statistical Features: Many advanced platforms now incorporate sophisticated statistical methods, including sequential testing (which uses adjusted significance thresholds so that a test can be checked at planned intervals and stopped early, either for a clear winner or for futility, without inflating the false positive rate) or bandit algorithms (which balance exploration and exploitation by shifting traffic to better-performing versions as the test runs).

- Integration with Existing Stacks: Look for tools that integrate seamlessly with your existing marketing automation, content management systems, and customer relationship management platforms. This unified approach provides richer targeting capabilities and better alignment across teams.
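
The bandit approach mentioned in the list above can be sketched with Thompson sampling, one popular bandit algorithm. This is an illustrative simulation, not how any particular platform implements it; the true conversion rates are invented and would be unknown in a real test.

```python
import random


def thompson_sampling(true_rates, rounds=5000, seed=42):
    """Simulate traffic allocation; return how many visitors each version received."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    pulls = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw a plausible conversion rate for each version from its Beta posterior.
        samples = [rng.betavariate(successes[i] + 1, failures[i] + 1)
                   for i in range(len(true_rates))]
        arm = samples.index(max(samples))  # show the version that looks best right now
        pulls[arm] += 1
        if rng.random() < true_rates[arm]:
            successes[arm] += 1
        else:
            failures[arm] += 1
    return pulls


# Hypothetical versions converting at 5% and 15%.
print(thompson_sampling([0.05, 0.15]))
```

Early on, traffic is spread across both versions (exploration); as evidence accumulates, most visitors are sent to the stronger one (exploitation). The trade-off is that the weaker version collects less data, so bandits suit short-lived campaigns better than tests meant to produce a precise estimate of the lift.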

Choosing the right tools based on your budget, ease of use, and required capabilities is a strategic decision that directly impacts how to optimize A/B testing for better results.

Building a Culture of Experimentation

True mastery of how to optimize A/B testing for better results extends beyond technical execution; it requires fostering an organizational culture that embraces data-driven decision-making and continuous learning.

Cross-Team Collaboration and Knowledge Sharing

Silos between different departments can hinder experimentation efforts. When marketing, product, and engineering teams collaborate, it leads to more innovative test ideas and a more unified understanding of customer behavior.

- Shared Goals: Aligning experimentation goals with broader business objectives ensures that everyone is working towards the same outcomes.

- Cross-Functional Teams: Experimenting with cross-functional teams, rather than specialized testing teams, can produce more innovative test ideas and help disseminate learnings throughout the organization.

- Centralized Knowledge System: Implement a system for sharing test results, insights, and recommendations. A centralized dashboard or regular team presentations can ensure that learnings are applied across different initiatives, maximizing the value derived from each test. This collective learning environment is a key aspect of how to optimize A/B testing for better results.

Documenting Learnings

Every experiment, whether it results in a “win” or an “inconclusive” outcome, generates valuable knowledge. Thorough documentation is essential for building an institutional memory of your experimentation program.

- Test Repository: Create a central repository where all hypotheses, methodologies, test setups, results, and actionable insights are stored.

- Detailed Analysis: Beyond simply reporting the numerical results, document the why behind the outcomes. Did the results validate the underlying behavioral assumptions? Were there any unexpected secondary effects?

- Recommendations and Next Steps: For each test, outline clear recommendations and identify future testing opportunities.
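
A test repository does not need special software to get started. As a minimal sketch, each experiment could be captured as a structured record and stored as JSON. The field names below are assumptions based on the hypothesis elements and documentation items described in this article.

```python
import json
from dataclasses import dataclass, asdict, field


@dataclass
class ExperimentRecord:
    name: str
    problem: str
    proposed_change: str
    expected_outcome: str
    reasoning: str
    primary_metric: str
    secondary_metrics: list = field(default_factory=list)
    result: str = "pending"  # e.g. "win", "loss", "inconclusive"
    learnings: str = ""
    next_steps: str = ""


def to_json(record):
    """Serialize a record so it can be saved to a shared repository."""
    return json.dumps(asdict(record), indent=2)


record = ExperimentRecord(
    name="product-page-headline",
    problem="Low add-to-cart rate on the product page",
    proposed_change="Headline 'Save 20% Today' instead of 'Our Amazing Products'",
    expected_outcome="15% increase in add-to-cart clicks",
    reasoning="Emphasizing immediate value tends to motivate users",
    primary_metric="add_to_cart_rate",
    secondary_metrics=["bounce_rate"],
)
print(to_json(record))
```

Keeping the hypothesis fields next to the result makes it easy to check later whether the reasoning held up, not just whether the number moved.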

This comprehensive documentation prevents repeating past mistakes and allows new team members to quickly get up to speed on what has already been tried and learned, which is crucial for how to optimize A/B testing for better results.

Iterating and Scaling

The journey of optimization is never truly complete. The insights gained from one test should inform the next, leading to a continuous cycle of improvement.

- Optimize Winning Versions: Once a winning variation is confirmed, implement it fully. However, the work doesn’t stop there. Consider how to further optimize that new “control” version.

- Expand Tests: If a test performs well on one page or segment, consider expanding it to similar areas of your digital experience. For example, if a new call-to-action performs well on a product page, test it on other product pages or category pages.

- Scale Experimentation: As your business grows, your experimentation program should scale with it. This might involve running more tests, conducting more complex multivariate tests, or investing in more advanced platforms and talent. The ability to scale your testing efforts is paramount to continually understanding how to optimize A/B testing for better results.

Common Mistakes to Avoid in Your Journey

Even seasoned professionals can fall prey to common pitfalls when experimenting. Being aware of these traps is an important step in how to optimize A/B testing for better results:

- Testing Without a Clear Hypothesis: Random changes without a data-backed theory waste time and resources.

- Failing to Align Tests with Business Goals: Optimizing minor elements without considering their impact on overarching business objectives leads to optimizing in a vacuum.

- Testing Too Many Variables at Once: This makes it impossible to determine which specific change caused the observed results, polluting your data.

- Ignoring Sample Size and Statistical Significance: Running tests on too small an audience or stopping prematurely leads to misleading and unreliable conclusions.

- Not Accounting for External Factors: Changes in seasonality, marketing campaigns, or news events can skew results if not monitored and considered.

- Misinterpreting Results: Focusing only on statistical significance without considering practical impact or neglecting secondary metrics can lead to poor decision-making.

- Not Documenting Learnings: Failing to record hypotheses, methodologies, and outcomes prevents organizational learning and improvement over time.

- Giving Up After One Failure: Not every test will be a winner, but every test is a learning opportunity. Inconclusive results provide valuable data for future hypotheses.

- Testing Low-Traffic Pages: Pages with insufficient traffic will take an extremely long time to reach statistical significance, delaying insights and wasting resources.

By diligently avoiding these common mistakes, you can significantly enhance the effectiveness of your experimentation program and truly understand how to optimize A/B testing for better results.

Conclusion

A/B testing is a dynamic and powerful tool for driving continuous improvement across digital experiences. The journey to truly optimize A/B testing for better results is an ongoing process that demands a blend of careful planning, rigorous execution, astute analysis, and a commitment to continuous learning. By starting with strong, data-driven hypotheses, meticulously setting up experiments with isolated variables and appropriate sample sizes, and diligently analyzing results with statistical rigor and practical relevance, businesses can unlock significant gains. Embracing advanced strategies like multivariate testing, personalization, and continuous iteration, coupled with fostering a collaborative culture of experimentation, will empower teams to make confident, data-backed decisions. This systematic approach not only enhances key performance indicators but also deepens understanding of user behavior, paving the way for sustained success in the competitive digital landscape. Master how to optimize A/B testing for better results, and you’ll transform your digital strategy from guesswork into a precise, impactful science.

FAQ (Frequently Asked Questions)

What is A/B testing optimization?

A/B testing optimization is the systematic process of refining experimentation practices to achieve more reliable, impactful, and actionable insights. It involves improving every stage of the testing lifecycle, from hypothesis generation and experiment setup to data analysis and result interpretation, ensuring that tests consistently yield meaningful improvements. This includes aspects like proper variable isolation, sample size determination, statistical rigor, and aligning tests with overarching business goals.

Why is a strong hypothesis crucial for A/B testing?

A strong hypothesis provides a clear, data-backed prediction for how a specific change will impact user behavior. It ensures focused testing, aligns experiments with business goals, and enables actionable insights regardless of the outcome. Without a strong hypothesis, tests can be random and may not yield clear learnings, making it difficult to understand the true impact of changes.